How to Query Semantics to Find Real-World Objects

This how-to covers:

- Querying semantics to detect what is on-screen at a point the player touches;

- Highlighting a specific semantic channel, both visually and in text;

- Available APIs for querying semantic information.

Prerequisites:

You will need a Unity project with ARDK installed and a basic AR scene set up. For more information, see Installing ARDK 3 and Setting up an AR Scene.

Adding UI Elements

Before implementing the script that handles semantic querying, we need to prepare the UI elements that will display semantic information to the user. For this example, we will create a text field to display the semantic channel name and a RawImage to handle shader output.

To create the UI elements:

- In the Hierarchy, right-click in your AR scene, then mouse over UI and select Text-TextMeshPro to add a text field.

- Repeat this process, but select Raw Image instead of Text-TextMeshPro to add a

RawImageto the scene. - Select the

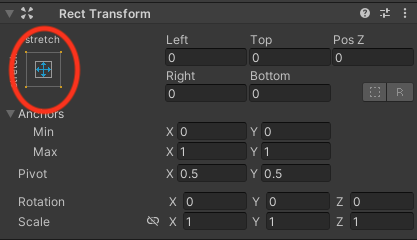

RawImage, then, in the Inspector window, click the square in the top-left corner of the Rect Transform menu, as shown here:

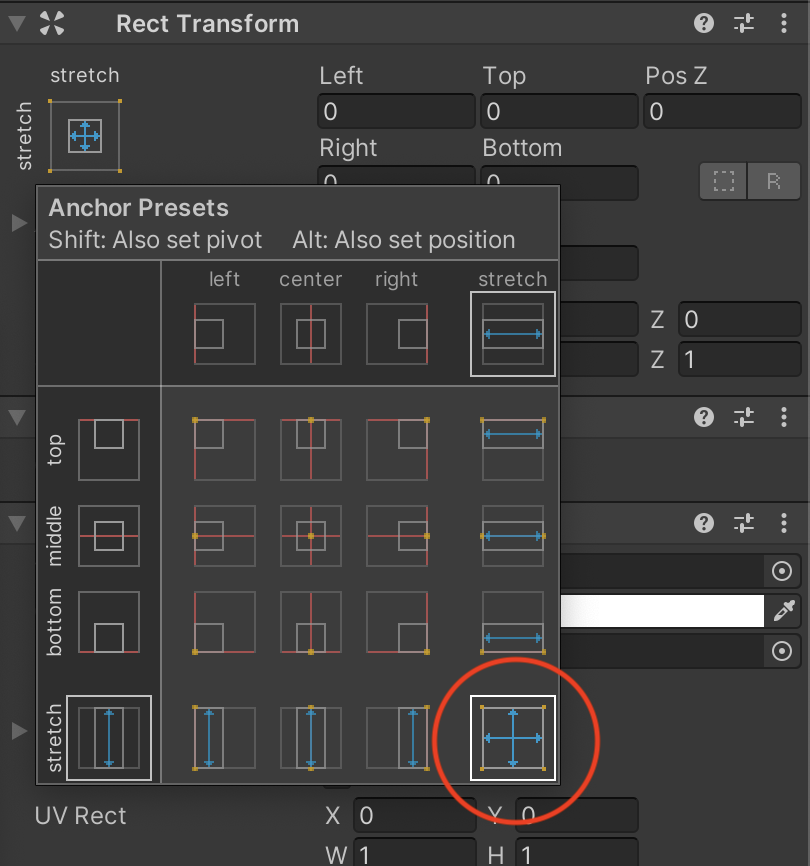

- In the Anchor Presets menu that opens, hold the Option key (Alt on Windows) and select the bottom-right square. This will place the

RawImagein the correct spot and stretch it so that it covers the entire screen.

Adding the Semantic Segmentation Manager

The Semantic Segmentation Manager provides access to the Semantics subsystem and serves semantic predictions that other parts of your code can access. (For more detailed information, see the Semantics Features page.)

To add a Semantic Segmentation Manager to your scene:

- Right-click in the Hierarchy window, then select Create Empty to add an empty GameObject to the scene. Name it Segmentation Manager.

- Select the new GameObject, then, in the Inspector window, click Add Component, search for "AR Semantic Segmentation Manager", and select it to add it as a Component.

Adding the Alignment Shader

To make sure the semantic information lines up with the camera image on-screen, the semantic buffer has to be rotated and scaled to match the display. Each time the ARCameraManager raises its frame update event, the query script retrieves the semantic texture together with a transform matrix. An overlay shader then uses that matrix to transform the UVs into the correct screen space. Finally, we render the semantic information into the RawImage we made earlier and display it to the user.
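The plain C# sketch below mirrors the shader's alignment step outside Unity, so you can see what it does to a single UV. It multiplies a 4x4 matrix by the homogeneous coordinate (u, v, 1, 1), which is what the alignment shader's vertex function does with mul(_SemanticMat, float4(v.uv, 1.0f, 1.0f)). It then divides x and y by z, as the fragment function does. SemanticUv and its methods are hypothetical names used only for illustration. At runtime the real matrix comes from GetSemanticChannelTexture, not from this code.

```csharp
// Hypothetical helper (not part of ARDK or Unity) that mirrors the UV math in SemanticShader.
public static class SemanticUv
{
    // Multiplies a row-major 4x4 matrix by the column vector (u, v, 1, 1), as HLSL's
    // mul(_SemanticMat, float4(uv, 1, 1)) does, and keeps the x, y, z components (.xyz).
    public static (float X, float Y, float Z) Transform(float[,] m, float u, float v)
    {
        float[] input = { u, v, 1f, 1f };
        var result = new float[3];
        for (int row = 0; row < 3; row++)
        {
            for (int col = 0; col < 4; col++)
            {
                result[row] += m[row, col] * input[col];
            }
        }
        return (result[0], result[1], result[2]);
    }

    // Perspective divide performed in the fragment shader: (x / z, y / z).
    public static (float U, float V) ToSemanticUv(float[,] m, float u, float v)
    {
        var (x, y, z) = Transform(m, u, v);
        return (x / z, y / z);
    }
}
```

Consider a made-up matrix with rows (0, 1, 0, 0), (-1, 0, 0, 1), (0, 0, 1, 0), (0, 0, 0, 1). It rotates UV space by 90 degrees about its center, so the UV (0.2, 0.9) maps to (0.9, 0.8). The identity matrix leaves every UV unchanged.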

To create the shader:

- Create a shader and material:
  - In the Project window, open the Assets directory.
  - Right-click in the Assets directory, then mouse over Create and select Unlit Shader from the Shader menu. Name it SemanticShader.
  - Repeat this process, but select Material from the Create menu to create a new material. Name it SemanticMaterial, then drag and drop SemanticShader onto it to associate them (or select Unlit/SemanticShader from the material's Shader drop-down in the Inspector).

- Add the shader code:
  - Select SemanticShader from the Assets directory, then, in the Inspector window, click Open to edit the shader code.
  - Replace the default shader code with the following alignment shader code:


```hlsl
Shader "Unlit/SemanticShader"
{
    Properties
    {
        _MainTex ("_MainTex", 2D) = "white" {}
        _SemanticTex ("_SemanticTex", 2D) = "red" {}
        _Color ("_Color", Color) = (1,1,1,1)
    }
    SubShader
    {
        Tags {"Queue"="Transparent" "IgnoreProjector"="True" "RenderType"="Transparent"}
        Blend SrcAlpha OneMinusSrcAlpha
        // No culling or depth
        Cull Off ZWrite Off ZTest Always

        Pass
        {
            CGPROGRAM
            #pragma vertex vert
            #pragma fragment frag

            #include "UnityCG.cginc"

            struct appdata
            {
                float4 vertex : POSITION;
                float2 uv : TEXCOORD0;
            };

            struct v2f
            {
                float2 uv : TEXCOORD0;
                float3 texcoord : TEXCOORD1;
                float4 vertex : SV_POSITION;
            };

            // Transform from screen UVs to semantic texture UVs, set by the query script.
            float4x4 _SemanticMat;

            v2f vert (appdata v)
            {
                v2f o;
                o.vertex = UnityObjectToClipPos(v.vertex);
                o.uv = v.uv;
                // Adjust the image to the correct rotation and aspect ratio.
                o.texcoord = mul(_SemanticMat, float4(v.uv, 1.0f, 1.0f)).xyz;
                return o;
            }

            sampler2D _MainTex;
            sampler2D _SemanticTex;
            fixed4 _Color;

            fixed4 frag (v2f i) : SV_Target
            {
                // Convert from homogeneous to 2D texture coordinates.
                float2 semanticUV = float2(i.texcoord.x / i.texcoord.z, i.texcoord.y / i.texcoord.z);
                float4 semanticCol = tex2D(_SemanticTex, semanticUV);
                // Tint with _Color; opacity is the semantic value scaled by _Color's alpha.
                return float4(_Color.r, _Color.g, _Color.b, semanticCol.r * _Color.a);
            }
            ENDCG
        }
    }
}
```

- Set up the material properties:
  - After you replace the shader code, the material is populated with the shader's properties. To access them, select SemanticMaterial from the Assets directory, then look in the Inspector window.
  - Click the color swatch to the right of the _Color property to set the material color and alpha. Set the alpha value to 128 (roughly 50%) so that the semantic color filter is translucent enough to show the real-world object beneath it. The color is up to you!
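The alpha you pick here is combined with the semantic texture in the shader's fragment function, where the output alpha is semanticCol.r * _Color.a. Unity's color picker can show alpha as a value from 0 to 255, but the shader receives it as a float from 0 to 1, so 128 becomes 128 / 255, or about 0.502. The plain C# sketch below reproduces that arithmetic. OverlayMath is a hypothetical name, and the sketch assumes that the red value sampled from the semantic texture lies between 0 and 1.

```csharp
// Hypothetical helper (not part of ARDK or Unity) reproducing the alpha math
// in SemanticShader's fragment function.
public static class OverlayMath
{
    // Converts a 0-255 alpha, as shown in Unity's color picker, to the 0-1 value the shader receives.
    public static float ByteAlphaToUnit(byte alpha) => alpha / 255f;

    // Fragment output alpha: semanticCol.r * _Color.a.
    // semanticR is the red value sampled from the semantic texture (assumed to be in [0, 1]).
    public static float OverlayAlpha(float semanticR, byte colorAlpha) =>
        semanticR * ByteAlphaToUnit(colorAlpha);
}
```

With the alpha set to 128, a pixel whose semantic red value is 1 is drawn at about 50% opacity. A pixel whose red value is 0 is fully transparent.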

Creating the Query Script

To get semantic information when the player touches the screen, we need a script that queries the Semantic Segmentation Manager at the touched point and displays the result.

To create the query script:

- Make the script file and add it to the Segmentation Manager:
  - In the Project window, select the Assets directory, then right-click inside the window, mouse over Create, and select C# Script. Name the new script SemanticQuerying.
  - In the Hierarchy, select the Segmentation Manager GameObject, then, in the Inspector window, click Add Component, search for "SemanticQuerying", and select it. (You can also drag the script from the Assets directory onto the GameObject.)

- Add code to the script:
  - Double-click the SemanticQuerying script in the Assets directory to open it in a text editor, then replace its contents with the following script. (See the Appendix for details on how each part of the script works.)


```csharp
using Niantic.Lightship.AR.Semantics;
using TMPro;
using UnityEngine;
using UnityEngine.UI;
using UnityEngine.XR.ARFoundation;

public class SemanticQuerying : MonoBehaviour
{
    public ARCameraManager _cameraMan;
    public ARSemanticSegmentationManager _semanticMan;
    public TMP_Text _text;
    public RawImage _image;
    public Material _material;

    // Semantic channel currently highlighted; updated when the player touches the screen.
    private string _channel = "ground";

    // Time accumulated since the last query, used to throttle GetChannelNamesAt calls.
    private float _timer = 0.0f;

    void OnEnable()
    {
        _cameraMan.frameReceived += OnCameraFrameUpdate;
    }

    private void OnDisable()
    {
        _cameraMan.frameReceived -= OnCameraFrameUpdate;
    }

    private void OnCameraFrameUpdate(ARCameraFrameEventArgs args)
    {
        if (_semanticMan.subsystem == null || !_semanticMan.subsystem.running)
        {
            return;
        }

        // Get the texture for the current channel. The out matrix lets the shader
        // rotate and scale the texture so it lines up with the screen.
        var texture = _semanticMan.GetSemanticChannelTexture(_channel, out Matrix4x4 mat);
        if (texture)
        {
            _image.material = _material;
            _image.material.SetTexture("_SemanticTex", texture);
            _image.material.SetMatrix("_SemanticMat", mat);
        }
    }

    void Update()
    {
        if (_semanticMan.subsystem == null || !_semanticMan.subsystem.running)
        {
            return;
        }

        // Read the pointer position: the mouse in the Editor, the first touch on a device.
        Vector2 pos;
#if UNITY_EDITOR
        if (!Input.GetMouseButton(0))
        {
            return;
        }
        pos = Input.mousePosition;
#else
        if (Input.touchCount == 0)
        {
            return;
        }
        pos = Input.GetTouch(0).position;
#endif

        // Ignore positions outside the screen.
        if (pos.x <= 0 || pos.x >= Screen.width || pos.y <= 0 || pos.y >= Screen.height)
        {
            return;
        }

        // Throttle the query to roughly once every 0.05 seconds while the pointer is down.
        _timer += Time.deltaTime;
        if (_timer > 0.05f)
        {
            var list = _semanticMan.GetChannelNamesAt((int)pos.x, (int)pos.y);
            if (list.Count > 0)
            {
                // Highlight the first channel found at this point and show its name.
                _channel = list[0];
                _text.text = _channel;
            }
            else
            {
                _text.text = "?";
            }
            _timer = 0.0f;
        }
    }
}
```
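The touch-handling part of Update in the script above does three things:

- It ignores positions outside the screen.
- It throttles queries so that GetChannelNamesAt runs at most about once every 0.05 seconds of on-screen input.
- It keeps the first channel name found, or shows "?" if there is none.

The plain C# sketch below restates that logic outside Unity, so you can reason about it (or unit-test it) without a device. ChannelQueryGate, ShouldQuery, IsOnScreen, and PickLabel are hypothetical names. In the real script, deltaTime comes from Time.deltaTime and the names list comes from GetChannelNamesAt.

```csharp
using System.Collections.Generic;

// Hypothetical, engine-independent restatement of the query logic in SemanticQuerying.Update.
public sealed class ChannelQueryGate
{
    private readonly float _interval;
    private float _timer;

    public ChannelQueryGate(float interval = 0.05f)
    {
        _interval = interval;
    }

    // Same strict bounds check as the script: the position must lie inside the screen.
    public static bool IsOnScreen(float x, float y, int width, int height) =>
        x > 0 && x < width && y > 0 && y < height;

    // Accumulates frame time while the pointer is on-screen and returns true once
    // more than the interval has passed, then resets the timer.
    public bool ShouldQuery(float x, float y, int width, int height, float deltaTime)
    {
        if (!IsOnScreen(x, y, width, height))
        {
            return false;
        }

        _timer += deltaTime;
        if (_timer > _interval)
        {
            _timer = 0f;
            return true;
        }
        return false;
    }

    // Mirrors the label update: the first channel name if any, otherwise "?".
    public static string PickLabel(IReadOnlyList<string> channelNames) =>
        channelNames.Count > 0 ? channelNames[0] : "?";
}
```

Keeping this logic in a separate class is only one design choice. The original script inlines the same steps in Update, which is simpler for a short example.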

Assigning Script Variables

Before the script can run, we need to assign its variables in Unity so that the script can reference the AR components, UI elements, and material it uses.

To assign the variables:

- In the Hierarchy, select the Segmentation Manager GameObject.

- In the Inspector window, assign the variables in the SemanticQuerying script Component by dragging and dropping each item to its respective field:
  - The scene's Main Camera (the camera with the AR Camera Manager component) to the Camera Man field;
  - The Segmentation Manager GameObject to the Semantic Man field;
  - The Text (TMP) object to the Text field;
  - The RawImage object to the Image field;
  - SemanticMaterial from the Assets directory to the Material field.

Build and Test

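Once you have added the script and assigned its variables, build to your device and test. When you touch the screen, the text field should show the name of the semantic channel at that point, and the area belonging to that channel should be highlighted in the color you set on SemanticMaterial. Your output should look something like this: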

Appendix: How Does the Query Script Work?

What's On The Screen?

At its simplest, the query checks the center of the screen on every frame. Passing (Screen.width / 2, Screen.height / 2) to ARSemanticSegmentationManager.GetChannelNamesAt sets the center pixel as the query target. The method returns the names of the semantic channels found at that pixel, which we then write to the UI text element. (The full script queries the touch position instead.)


```csharp
using Niantic.Lightship.AR.Semantics;
using TMPro;
using UnityEngine;

public class SemanticQuerying : MonoBehaviour
{
    public ARSemanticSegmentationManager _semanticMan;
    public TMP_Text _text;

    void Update()
    {
        if (_semanticMan.subsystem == null || !_semanticMan.subsystem.running)
        {
            return;
        }

        // Query the pixel at the center of the screen.
        var list = _semanticMan.GetChannelNamesAt(Screen.width / 2, Screen.height / 2);

        // Show every channel found at that pixel, separated by commas.
        _text.text = string.Join(", ", list);
    }
}
```
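This snippet relies on two small calculations that are easy to check in isolation. First, the query point is the integer center of the screen. C# integer division truncates, so a screen 1081 pixels wide gives x = 540. Second, the label is built from every name in the returned list. The plain C# sketch below restates both. CenterQuery, CenterPixel, and FormatLabel are hypothetical names, and the ", " separator is an arbitrary choice for illustration.

```csharp
using System.Collections.Generic;

// Hypothetical helpers restating the center-of-screen query in plain C#.
public static class CenterQuery
{
    // Integer center of the screen, as in (Screen.width / 2, Screen.height / 2).
    public static (int X, int Y) CenterPixel(int screenWidth, int screenHeight) =>
        (screenWidth / 2, screenHeight / 2);

    // Joins every channel name reported at the queried pixel into one label.
    // Returns an empty string when no channel was found.
    public static string FormatLabel(IEnumerable<string> channelNames) =>
        string.Join(", ", channelNames);
}
```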

Highlighting the Semantic Class

GetSemanticChannelTexture returns the texture for a given semantic channel. Here we pass the first channel found at the center of the screen and display the result in the RawImage UI element. The function also outputs a Matrix4x4 through an out parameter, and the shader uses this matrix to align the texture with the screen, as explained in Adding the Alignment Shader.


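```csharp
using Niantic.Lightship.AR.Semantics;
using TMPro;
using UnityEngine;
using UnityEngine.UI;

public class SemanticQuerying : MonoBehaviour
{
    public ARSemanticSegmentationManager _semanticMan;
    public TMP_Text _text;
    // This RawImage is expected to use SemanticMaterial (the alignment shader).
    public RawImage _image;

    void Update()
    {
        if (_semanticMan.subsystem == null || !_semanticMan.subsystem.running)
        {
            return;
        }

        var list = _semanticMan.GetChannelNamesAt(Screen.width / 2, Screen.height / 2);
        _text.text = string.Join(", ", list);

        // Highlight the class that was found; if there are several, just show the first one.
        if (list.Count > 0)
        {
            var texture = _semanticMan.GetSemanticChannelTexture(list[0], out Matrix4x4 mat);
            if (texture)
            {
                // The shader uses the matrix to align the texture with the screen.
                _image.material.SetTexture("_SemanticTex", texture);
                _image.material.SetMatrix("_SemanticMat", mat);
            }
        }
    }
}
```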