Sample Projects

| Lightship samples are designed to demonstrate each feature in our SDK. The samples project launches multiple small samples that you can try out, and you can look through the code to learn how to get started with any feature. You can follow our How-tos for a step-by-step guide to leveraging each feature. In addition to the samples project, we also offer standalone mini-game examples that show how to combine multiple features into a more complete AR experience. Emoji Garden is the first of these to be released; you can inspect it to learn about best practices for creating persistent Shared AR experiences. Head to the Emoji Garden feature page to learn more about it and download the project. |

Installing the Samples

The samples are available on our GitHub: https://github.com/niantic-lightship/ardk-samples

How to clone/download the samples

git clone https://github.com/niantic-lightship/ardk-samples.git

or

Download the repo from https://github.com/niantic-lightship/ardk-samples using the Code button on GitHub, then choosing Download ZIP.

Open the samples project in Unity by pressing Add in Unity Hub and browsing to the project.

You will also need to add a Lightship API key for all samples to work correctly.

Running the Samples in Unity 2022

By default, our sample projects run on Unity 2021.3.29f1, but you can update them to version 2022.3.37f1 if you would prefer to use Unity 2022.
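Before switching editors, it can help to confirm which Unity version a cloned project currently targets. The sketch below reads the `m_EditorVersion` entry that Unity stores in `ProjectSettings/ProjectVersion.txt`. The function name `read_editor_version` and the `ardk-samples` folder name in the usage line are illustrative assumptions, not part of the samples repository.

```python
import re
from pathlib import Path
from typing import Optional


def read_editor_version(project_dir: str) -> Optional[str]:
    """Return the Unity editor version recorded for a project, or None.

    Hypothetical helper: it only parses ProjectSettings/ProjectVersion.txt
    and does not talk to Unity or Unity Hub.
    """
    version_file = Path(project_dir) / "ProjectSettings" / "ProjectVersion.txt"
    if not version_file.is_file():
        return None
    for line in version_file.read_text(encoding="utf-8").splitlines():
        # Matches "m_EditorVersion: <version>" but not the longer
        # "m_EditorVersionWithRevision: ..." key on the following line.
        match = re.match(r"m_EditorVersion:\s*(\S+)", line)
        if match:
            return match.group(1)
    return None


if __name__ == "__main__":
    # Assumes the repository was cloned into ./ardk-samples.
    print(read_editor_version("ardk-samples"))
```

After changing the editor version in Unity Hub and reopening the project, running the same check again should report the new version.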

To update the samples to Unity 2022:

- In Unity Hub, under Installs, install 2022.3.37f1 if you do not have it already.

- Under Projects, find the ARDK sample project. Click on the Editor Version and change it to 2022.3.37f1. Then click the Open with 2022.3.37f1 button.

- When the Change Editor version? dialog comes up, click Change Version.

- When the Opening Project in Non-Matching Editor Installation dialog comes up, click Continue.

- Disable the custom base Gradle template:

  - In the Unity top menu, click Edit, then Project Settings.

  - In the left-hand Project Settings menu, select Player, then click the Android tab.

  - Scroll down to Publishing Settings, then un-check the box labeled Custom Base Gradle Template.

- In the Window top menu, open the Package Manager. Select Visual Scripting from the package list, then, if you are using version 1.9.0 or earlier, click the Update button.

- If there are any errors, the Enter Safe Mode? dialog will pop up. Click Enter Safe Mode to fix the errors.
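The Visual Scripting check in the steps above can also be done outside the editor. The following is a minimal sketch, assuming Visual Scripting is listed as a direct dependency under its package name `com.unity.visualscripting` in the project's `Packages/manifest.json`. The helper names are hypothetical, and the update itself still happens in the Package Manager.

```python
import json
import re
from pathlib import Path
from typing import Optional, Tuple

VISUAL_SCRIPTING = "com.unity.visualscripting"


def parse_version(text: str) -> Tuple[int, ...]:
    """Turn '1.9.0' (or '1.9.0-pre.1') into (1, 9, 0); suffixes are ignored."""
    match = re.match(r"(\d+)\.(\d+)\.(\d+)", text)
    if not match:
        raise ValueError(f"unrecognized version: {text!r}")
    return tuple(int(part) for part in match.groups())


def visual_scripting_needs_update(project_dir: str) -> Optional[bool]:
    """True if the listed version is 1.9.0 or earlier, False if it is newer,
    None if the package is not a direct dependency of the project."""
    manifest = Path(project_dir) / "Packages" / "manifest.json"
    data = json.loads(manifest.read_text(encoding="utf-8"))
    version = data.get("dependencies", {}).get(VISUAL_SCRIPTING)
    if version is None:
        return None
    return parse_version(version) <= (1, 9, 0)


if __name__ == "__main__":
    # Assumes the repository was cloned into ./ardk-samples.
    print(visual_scripting_needs_update("ardk-samples"))
```

Comparing versions as integer tuples avoids the string-comparison trap where "1.10.0" would sort before "1.9.0".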

Installing Head-Mounted Display Samples

The Lightship Magic Leap 2 integration is in beta, so some features may not work as expected.

The Lightship Magic Leap Plugin includes sample scenes designed for use with Magic Leap 2 in AR.

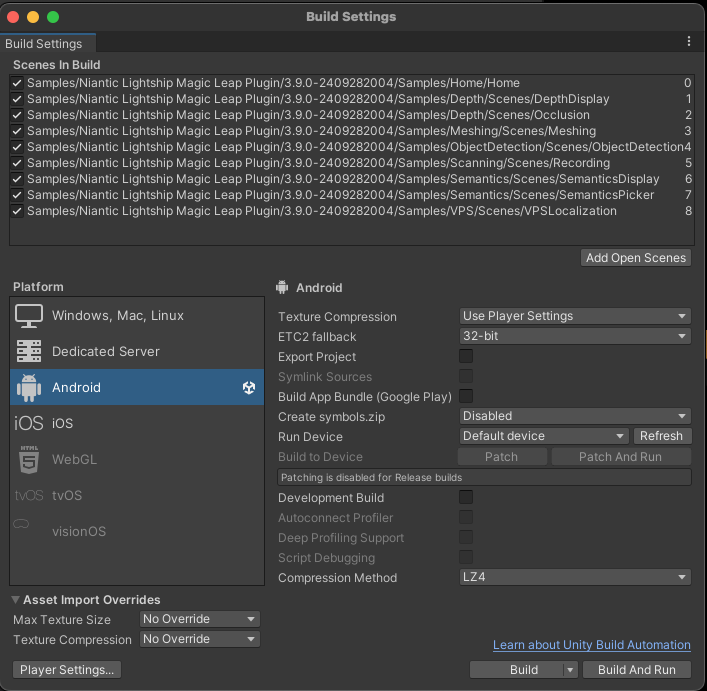

To install the Magic Leap 2 samples:

- Follow the steps to install Lightship for Magic Leap 2.

- In Unity, open the Window top menu, then select Package Manager. Ensure that Packages: In Project is selected.

- Under Niantic Lightship Magic Leap Plugin, select the Samples tab.

- Click Import to import the samples into your current project.

- Find the sample scenes under Assets/Samples/Niantic Lightship Magic Leap Plugin/.

- In the File top menu, select Build Settings.

- Drag the sample scenes that you wish to test into Scenes in Build.

- Ensure that the Home scene is at the top of the list. With the Magic Leap 2 device connected and awake, select Build and Run to test the samples.
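To see which scenes were imported and the order they should take in Scenes in Build, a small script can list them from disk. This is a sketch under two assumptions: the imported scenes are `.unity` files somewhere below the samples folder named above, and the Home scene is a file called `Home.unity`. The function name `ordered_sample_scenes` is hypothetical, and the script only prints paths; it does not modify the Unity build settings.

```python
from pathlib import Path
from typing import List

# Folder named in the steps above, relative to the Unity project root.
SAMPLES_ROOT = Path("Assets") / "Samples" / "Niantic Lightship Magic Leap Plugin"


def ordered_sample_scenes(project_dir: str, first_scene: str = "Home") -> List[Path]:
    """List sample scenes relative to the project, with `first_scene` on top."""
    root = Path(project_dir) / SAMPLES_ROOT
    scenes = sorted(root.rglob("*.unity"))
    # Stable sort: scenes whose name matches `first_scene` get key False and
    # move to the front, the rest keep their alphabetical order.
    scenes.sort(key=lambda scene: scene.stem != first_scene)
    return [scene.relative_to(project_dir) for scene in scenes]


if __name__ == "__main__":
    # Assumes the script is run from the Unity project root.
    for scene in ordered_sample_scenes("."):
        print(scene)
```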

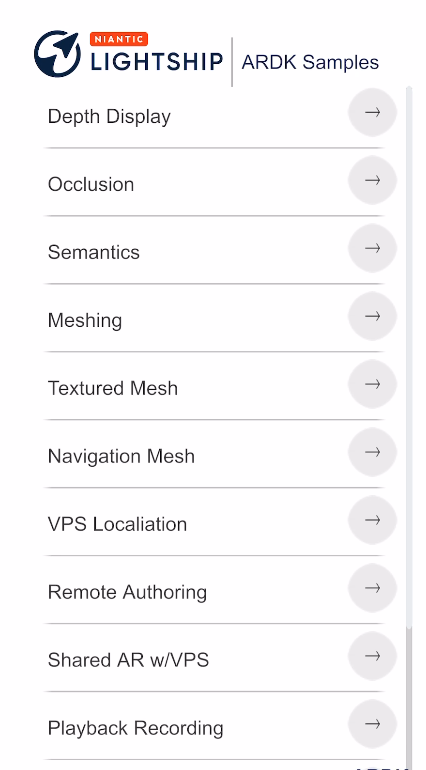

Samples

Depth Display

| The depth scene demonstrates how to get the depth buffer and display it as an overlay in the scene. Open DepthDisplay.unity in the Depth folder to try it out. |

Occlusion

| This scene demonstrates occlusion by moving a static cube in front of the camera. Because the cube does not move, you can walk around and inspect the occlusion quality directly. To open it, see Occlusion.unity in the Depth folder. This sample also demonstrates two advanced occlusion options available in Lightship, Occlusion Suppression and Occlusion Stabilization. These options reduce flicker and improve the visual quality of occlusions using input from either semantics or meshing. For more information on how these capabilities work, see the How-To sections for Occlusion Suppression and Occlusion Stabilization. |

Semantics

| This scene demonstrates semantics by applying a shader that colors anything recognized on-screen as part of a semantic channel. To open this sample, see SemanticsDisplay.unity in the Semantics folder. To use this sample:

|

Object Detection

| This scene demonstrates object detection by drawing a 2D bounding box around each detection it finds. In the settings menu, you can toggle between showing all detected classes and showing only a class selected from the provided drop-down. To open this sample, see ObjectDetection.unity in the ObjectDetection folder. |

Meshing

| This scene demonstrates how to use meshing to generate a physics mesh in your scene. It shows the mesh using a Normal shader, in which the colors represent Up, Right, and Forward. To open this sample, see NormalMeshes.unity in the Meshing folder. |

Textured Mesh

| This scene demonstrates how to texture a Lightship mesh. It works like the Meshing sample but uses an example tri-planar shader that demonstrates one way to do world-space UV projection. The sample tiles three textures in the scene: one each for the ground, the walls, and the ceiling. To open this sample, see TexturedMesh.unity in the Meshing folder. |
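The idea behind tri-planar, world-space UV projection can be sketched outside a shader. The code below is a generic illustration of the technique, not the sample's shader. It assumes Unity's Y-up convention, and the names `triplanar_weights`, `projection_uvs`, `surface_kind`, the `sharpness` exponent, and the 0.5 threshold are all illustrative choices.

```python
from typing import Dict, Tuple

Vec3 = Tuple[float, float, float]
Vec2 = Tuple[float, float]


def triplanar_weights(normal: Vec3, sharpness: float = 4.0) -> Vec3:
    """Blend weights for the X, Y and Z projections; they sum to 1."""
    powered = [abs(component) ** sharpness for component in normal]
    total = sum(powered)
    if total == 0.0:
        raise ValueError("normal must be non-zero")
    return tuple(value / total for value in powered)


def projection_uvs(position: Vec3, tiling: float = 1.0) -> Dict[str, Vec2]:
    """World-space UVs for each axis-aligned projection of a surface point."""
    x, y, z = position
    return {
        "x": (z * tiling, y * tiling),  # surfaces facing along X use the ZY plane
        "y": (x * tiling, z * tiling),  # floors and ceilings use the XZ plane
        "z": (x * tiling, y * tiling),  # surfaces facing along Z use the XY plane
    }


def surface_kind(normal: Vec3, threshold: float = 0.5) -> str:
    """Pick a texture slot from the normal's vertical component (Y is up)."""
    if normal[1] > threshold:
        return "ground"
    if normal[1] < -threshold:
        return "ceiling"
    return "wall"


if __name__ == "__main__":
    floor_normal = (0.0, 1.0, 0.0)
    print(surface_kind(floor_normal), triplanar_weights(floor_normal))
    print(projection_uvs((1.0, 2.0, 3.0), tiling=2.0)["y"])
```

Because the UVs come from world position rather than mesh UVs, the textures tile consistently across a mesh that is generated and updated at runtime.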

Navigation Mesh

| This scene demonstrates using meshing to create a Navigation Mesh. As you move around, the sample creates and grows a navigation mesh that you can click on to tell an AI agent to move to that position. The agent can walk around corners and jump up on objects. To open the sample, see NavigationMesh.unity in the NavigationMesh folder. To view this demonstration:

|

Remote Authoring

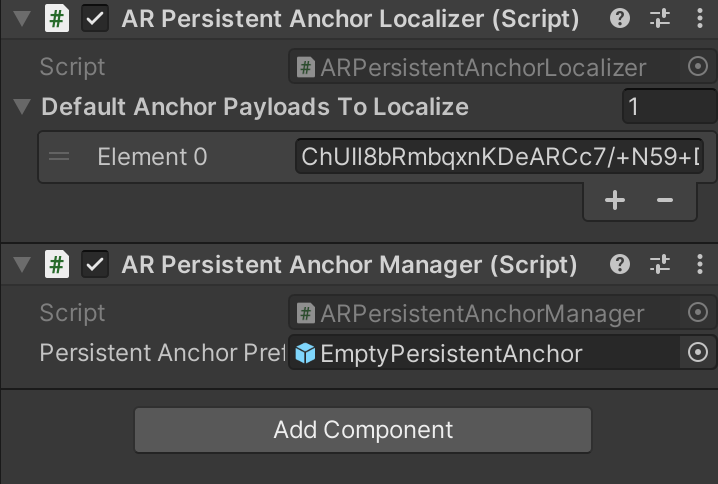

| Note: This sample only works in portrait orientation. This scene demonstrates target localization by targeting a VPS Anchor. To open this sample, see RemoteAuthoring.unity in the PersistentAR folder. To use this sample:

Changing the Blob at Runtime

You can open the Geospatial Browser on your test device, copy the Blob of a different anchor, and paste it into the Payload text box when the app is running. |

VPS Localization

| Attention: This sample requires a Lightship API Key. This scene shows a list of VPS locations in a radius, allows you to choose a Waypoint from the Coverage API as a localization target, then interfaces with your phone's map to guide you to it. To open this sample, see VPSLocalization.unity in the PersistentAR folder. To use this sample:

|

Shared AR VPS

| Attention: This sample requires a Lightship API Key. This scene allows you to choose a Waypoint from the Coverage API and create a shared AR experience around it. To open this sample, see SharedARVPS.unity in the SharedAR folder. To use this sample on mobile devices:

To use this sample with Playback in the Unity editor:

|

Shared AR Image Tracking Colocalization

| Attention: This sample requires a Lightship API Key. This scene allows multiple users to join a shared room without a VPS location, using a static image as the origin point. To open this sample, see ImageTrackingColocalization.unity in the SharedAR folder. To use this sample:

|

World Pose

| This sample demonstrates how the World Positioning System improves the camera's accuracy by showing a comparison between the device's GPS compass and the World Pose compass. As you walk around, the World Pose compass should stabilize and become more accurate over time. |

Recording

| This scene allows you to scan a real-world location for playback in your editor. To open this sample, see Recording.unity in the Scanning folder. To learn how to use this sample, see How to Create Datasets for Playback. |